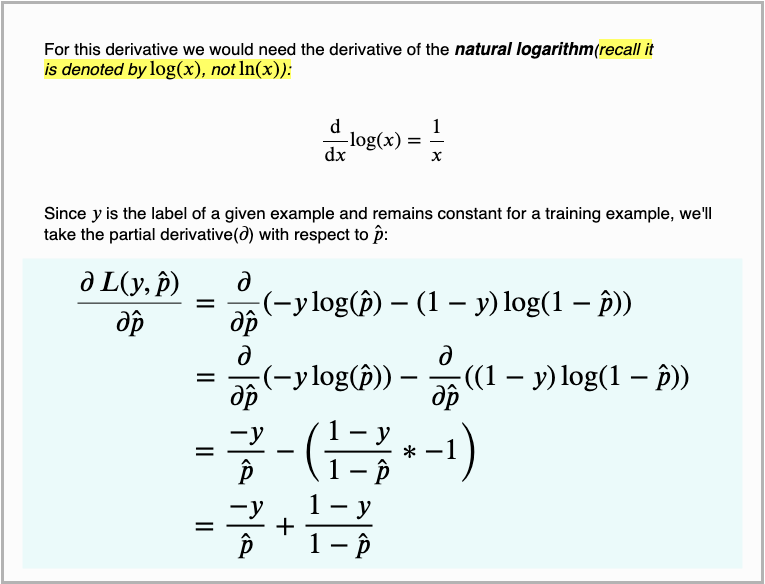

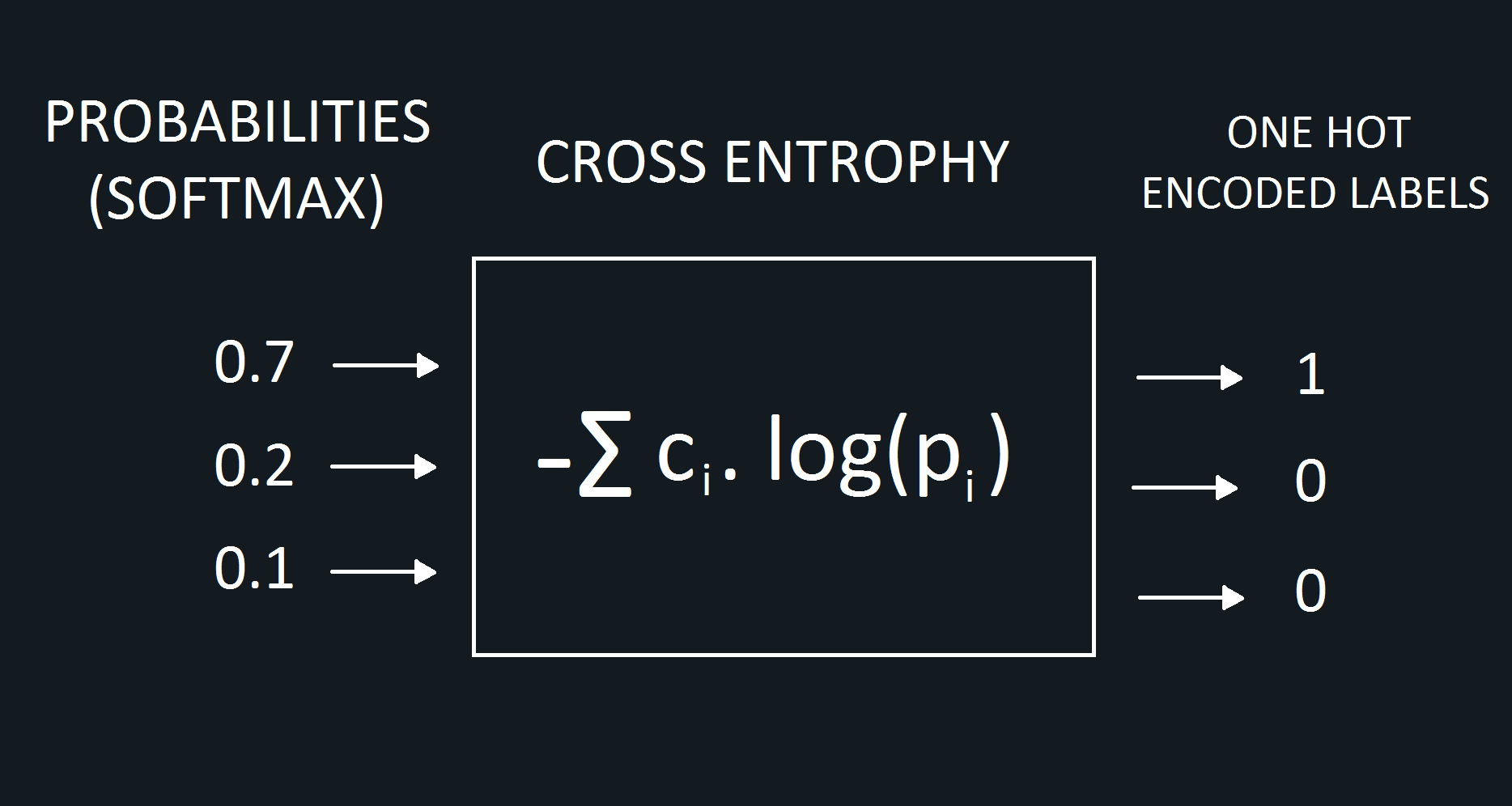

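These are the dance moves of the most common activation functions in deep learning.

The cross-entropy loss function is used as an optimization function to estimate parameters for logistic regression models or models which have a softmax output. It is also termed the log loss function when considering logistic regression. This is because the negative of the log-likelihood function is minimized.

For distributions P and Q, the cross entropy is $H(P, Q) = -\sum_x P(x) \log Q(x)$. The information content of outcomes (aka the coding scheme used for that outcome) is based on Q, but the true distribution P is used as weights for calculating the expected entropy. And the Kullback-Leibler divergence is the difference between the cross entropy $H(P, Q)$ and the true entropy $H(P)$.

By the chain rule, the derivative of the error with respect to a score splits into the derivative of the error with respect to the probabilities and the derivative of the probabilities with respect to the score. Let's calculate these derivatives separately.

$$\frac{\partial E}{\partial p_i} = \frac{\partial \left( -\sum_j c_j \log(p_j) \right)}{\partial p_i}$$

Expanding the sum term:

$$\frac{\partial E}{\partial p_i} = -\frac{\partial \left( c_1 \log(p_1) + c_2 \log(p_2) + \dots + c_i \log(p_i) + \dots + c_n \log(p_n) \right)}{\partial p_i}$$

Now, we can derive the expanded term easily. Only the $c_i \log(p_i)$ term has a derivative with respect to $p_i$. Notice that the derivative of ln(x) is equal to 1/x.

$$\frac{\partial E}{\partial p_i} = -\frac{c_i}{p_i}$$

Now, it is time to calculate $\partial p_i / \partial \text{score}_i$. However, we've already calculated the derivative of the softmax function in a previous post: $\partial p_i / \partial \text{score}_i = p_i (1 - p_i)$, and for $j \neq i$, $\partial p_j / \partial \text{score}_i = -p_j p_i$. Because every probability depends on $\text{score}_i$, the chain rule sums over all outputs:

$$\frac{\partial E}{\partial \text{score}_i} = \sum_j \frac{\partial E}{\partial p_j} \frac{\partial p_j}{\partial \text{score}_i} = -\frac{c_i}{p_i} \, p_i (1 - p_i) + \sum_{j \neq i} \frac{c_j}{p_j} \, p_j p_i = -c_i + c_i p_i + p_i \sum_{j \neq i} c_j$$

The one hot encoded labels sum to 1, so the last two terms add up to $p_i$:

$$\frac{\partial E}{\partial \text{score}_i} = -c_i + p_i = p_i - c_i$$

As seen, the derivative of the cross entropy error function is pretty.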
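As a sanity check on the result above, here is a minimal sketch that compares $p_i - c_i$ with a finite-difference estimate of the gradient. The scores and labels are made-up values, the function names are illustrative and not from the original post, and log is taken as the natural logarithm.

```python
import math

def softmax(scores):
    # Subtract the max score for numerical stability; the result is unchanged.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def cross_entropy(probs, one_hot):
    # E = -sum(c_i * ln(p_i)); terms with c_i = 0 contribute nothing.
    return -sum(c * math.log(p) for c, p in zip(one_hot, probs) if c > 0)

def analytic_gradient(scores, one_hot):
    # dE/dscore_i = p_i - c_i
    probs = softmax(scores)
    return [p - c for p, c in zip(probs, one_hot)]

def numeric_gradient(scores, one_hot, eps=1e-6):
    # Central finite differences, one score at a time.
    grads = []
    for i in range(len(scores)):
        up = list(scores)
        down = list(scores)
        up[i] += eps
        down[i] -= eps
        e_up = cross_entropy(softmax(up), one_hot)
        e_down = cross_entropy(softmax(down), one_hot)
        grads.append((e_up - e_down) / (2 * eps))
    return grads

scores = [2.0, 1.0, 0.1]  # hypothetical network scores
labels = [1, 0, 0]        # hypothetical one hot encoded class

analytic = analytic_gradient(scores, labels)
numeric = numeric_gradient(scores, labels)
for a, n in zip(analytic, numeric):
    print(f"analytic={a:+.6f}  numeric={n:+.6f}")
```

The two columns should agree to several decimal places. The gradient components also sum to zero, since both the probabilities and the labels sum to 1.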

Neural networks produce multiple outputs in multi-class classification problems. However, they cannot produce exact outputs; they can only produce continuous results. We apply some additional steps to transform continuous results into exact classification results.

Applying the softmax function normalizes the outputs to the range [0, 1]. Also, the sum of the outputs is always equal to 1 when softmax is applied. After that, applying one hot encoding transforms the outputs into binary form. That's why softmax and one hot encoding are applied, in that order, to the neural network's output layer. Finally, the resulting output is the predicted class, to be compared with the true labeled output. Herein, the cross entropy function relates the probabilities to the one hot encoded labels.

We need to know the derivative of the loss function to back-propagate. If the loss function were MSE, its derivative would be easy (the difference between expected and predicted output). Things become more complex when the error function is cross entropy.

$$E = -\sum_i c_i \log(p_i)$$

Here c refers to the one hot encoded classes (or labels) whereas p refers to the softmax applied probabilities. PS: some sources might define the function as $E = -\sum_i \left[ c_i \log(p_i) + (1 - c_i) \log(1 - p_i) \right]$.

Notice that we first apply softmax to the scores calculated by the neural network, which gives the probabilities. Cross entropy is applied second, to the softmax applied probabilities and the one hot encoded classes. That's why we need to calculate the derivative of the total error with respect to each score. We can apply the chain rule to calculate the derivative.

In information-theoretic terms, $-\log Q(x)$ is the information content of Q, but instead weighted by the distribution P.

Cross-entropy loss is the sum of the negative logarithms of the predicted probabilities for each student. Model A's cross-entropy loss is 2.073; model B's is 0.505. Cross-entropy gives a good measure of how effective each model is.
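The forward pass described here (scores, then softmax, then one hot encoding for the prediction, then cross entropy against the true label) can be sketched as follows. This is a minimal illustration in plain Python, assuming made-up scores and labels and the natural logarithm; the helper names are hypothetical.

```python
import math

def softmax(scores):
    # Normalize raw scores into probabilities in [0, 1] that sum to 1.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def one_hot_prediction(probs):
    # Set the most probable class to 1 and every other class to 0.
    best = max(range(len(probs)), key=lambda i: probs[i])
    return [1 if i == best else 0 for i in range(len(probs))]

def cross_entropy(probs, one_hot_labels):
    # E = -sum(c_i * ln(p_i)), with c the one hot encoded true label.
    return -sum(c * math.log(p) for c, p in zip(one_hot_labels, probs) if c > 0)

scores = [2.0, 1.0, 0.1]   # hypothetical output-layer scores
true_labels = [0, 1, 0]    # hypothetical one hot encoded true class

probs = softmax(scores)
predicted = one_hot_prediction(probs)
loss = cross_entropy(probs, true_labels)

print("probabilities:", [round(p, 4) for p in probs])
print("predicted class (one hot):", predicted)
print("cross entropy loss:", round(loss, 4))
```

Note that the loss is computed from the softmax probabilities rather than from the one hot prediction, so it stays differentiable with respect to the scores.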
If you're feeling a bit lost at this stage, don't worry, things will become much clearer soon.

Information I in information theory is generally measured in bits, and can loosely, yet instructively, be defined as the amount of "surprise" arising from a given event. To take a simple example, imagine we have an extremely unfair coin which, when flipped, has a 99% chance of landing heads and only a 1% chance of landing tails.
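To put numbers on the "surprise" of each outcome of this coin, here is a small sketch. It assumes the standard self-information definition $I(x) = -\log_2 p(x)$, which the text above describes in words but does not write out; the function name is illustrative.

```python
import math

def information_bits(probability):
    # Self-information in bits: rarer events carry more "surprise".
    return -math.log2(probability)

heads_bits = information_bits(0.99)  # the very likely outcome
tails_bits = information_bits(0.01)  # the very unlikely outcome

print(f"heads: {heads_bits:.4f} bits")  # heads: 0.0145 bits
print(f"tails: {tails_bits:.4f} bits")  # tails: 6.6439 bits
```

Seeing heads tells us almost nothing, while the rare tails outcome carries several bits of information.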

If you've been involved with neural networks and have been using them for classification, you almost certainly will have used a cross entropy loss function. However, have you really understood what cross-entropy means? Do you know what entropy means in the context of machine learning? If not, then this post is for you. In this introduction, I'll carefully unpack the concepts and mathematics behind entropy, cross entropy and a related concept, KL divergence, to give you a better foundational understanding of these important ideas.

For starters, let's look at the concept of entropy. The term entropy originated in statistical thermodynamics, which is a sub-domain of physics. However, for machine learning, we are more interested in entropy as defined in information theory, or Shannon entropy. This formulation of entropy is closely tied to the allied idea of information. Entropy is the average rate of information produced by a certain stochastic process. As such, we first need to unpack what the term "information" means in an information theory context.
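For readers who prefer code to definitions, the three quantities named in this introduction can be sketched as below. The formulas are the standard information-theoretic ones in bits, not taken verbatim from this post; P is the unfair coin used as an example in this post, and Q is a hypothetical model that wrongly assumes a fair coin.

```python
import math

def entropy(p):
    # H(P) = -sum P(x) log2 P(x): average information under P.
    return -sum(pi * math.log2(pi) for pi in p if pi > 0)

def cross_entropy(p, q):
    # H(P, Q) = -sum P(x) log2 Q(x): information content of Q, weighted by P.
    return -sum(pi * math.log2(qi) for pi, qi in zip(p, q) if pi > 0)

def kl_divergence(p, q):
    # D_KL(P || Q) = sum P(x) log2(P(x) / Q(x)).
    return sum(pi * math.log2(pi / qi) for pi, qi in zip(p, q) if pi > 0)

p = [0.99, 0.01]  # true distribution: the unfair coin
q = [0.5, 0.5]    # hypothetical model: a fair coin

h_p = entropy(p)
h_pq = cross_entropy(p, q)
kl = kl_divergence(p, q)

print(f"entropy H(P)          = {h_p:.4f} bits")
print(f"cross entropy H(P, Q) = {h_pq:.4f} bits")
print(f"KL divergence         = {kl:.4f} bits")
```

The KL divergence printed here equals the cross entropy minus the entropy, which is the extra cost of describing outcomes of P with a code built for Q.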
